By now you probably know that I’m a fan of A/B testing (also called split testing).

It is a “scientific” way of taking the guesswork out of your online marketing efforts.

But what would happen if I told you that A/B testing can actually lead to a dramatically lower conversion rate, even when the numbers are showing you that it’s a raging success?

Yeah, it would frighten me too!

In this article I’m going to share a few things that happened to me this week that made me rethink how I go about some of my split tests.

*Dramatic, ominous music*

What is A/B testing again?

In case you’re not sure, A/B testing is where you run an experiment on your sales page, website or blog whereby you change one element and see if it outperforms the original.

For example, you might have an individual page on your blog (like I do) where you ask people to subscribe to your mailing list. On that page you might run a test to see whether a red submit button outperforms a green one. Or you might change the button text from “Submit” to “Get started!” and see which works best.

The amazing thing about A/B testing is that you often get results that really surprise you. I remember hearing once about some internet marketers who removed the social proof from their sidebar and saw conversions skyrocket (if anyone remembers that article, please let me know). That is a really surprising result, because marketers are always telling us that social proof boosts conversions.

If you’d like a really cool introduction to split testing, WIRED has an amazing article about how Barack Obama used it with great success in his first election campaign.

Two A/B tests that I ran last week that went badly wrong

Okay, so you’re probably pretty keen to find out about my split testing horror story. Well, it’s not all that bad, but it did make me rethink the way I go about my testing a little bit.

1. Split testing my pop-up opt-in form

The first A/B test that I started last week was in conjunction with my post about AWeber. In that post I mentioned that I was going to start a split test on my pop-up forms to show everyone how much valuable information can be gathered (if you’d like to learn how to do that, here’s a video).

Well, that was where the problem started. After a day or so I checked the split test and saw this:

Quick explanation if this is new to you.

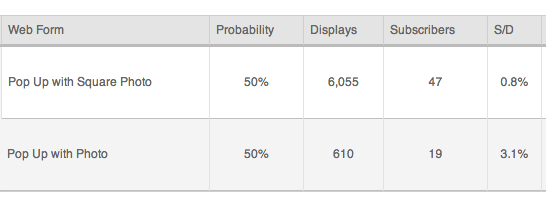

As you can see, I’m running two different pop-ups. My usual one is the bottom one, and the new version I’m testing has a square photo and is much narrower.

- Probability is the share of visitors assigned to each version. Here the split is 50/50, so half of people see one version and the other half see the other.
- Displays shows how many people have actually seen each version. (This is the problem.)
- Subscribers shows how many people have signed up through that version. (Hmm.)
- S/D is subscribers divided by displays. This is your conversion rate.
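To make those four columns concrete, here is a minimal Python sketch of what a split-testing tool does behind the scenes: it assigns each visitor to a version according to the Probability weights, counts displays and subscribers, and reports S/D. This is not AWeber’s implementation; the function names and the simulated 3% sign-up chance are hypothetical, chosen only for illustration.

```python
import random
from collections import Counter


def assign_variant(weights: dict[str, float], rng: random.Random) -> str:
    """Pick one variant name, with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def conversion_rates(displays: Counter, subscribers: Counter) -> dict[str, float]:
    """S/D for each variant: subscribers divided by displays (0.0 if never shown)."""
    return {v: (subscribers[v] / displays[v] if displays[v] else 0.0) for v in displays}


def simulate(visitors: int, weights: dict[str, float], signup_chance: float, seed: int = 42):
    """Simulate visitors who each see one form and sometimes subscribe (hypothetical data)."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    displays, subscribers = Counter(), Counter()
    for _ in range(visitors):
        variant = assign_variant(weights, rng)
        displays[variant] += 1
        if rng.random() < signup_chance:
            subscribers[variant] += 1
    return displays, subscribers


if __name__ == "__main__":
    # A 50/50 split, like the Probability column; the 3% chance is made up.
    displays, subscribers = simulate(10_000, {"original": 0.5, "new": 0.5}, 0.03)
    print(displays)  # with a working 50/50 split, both counts land near 5,000
    print(conversion_rates(displays, subscribers))
```

The point of the simulation is the sanity check: when the split is working, the Displays column should come out roughly in proportion to the Probability column. When it doesn’t, as in my screenshot, the S/D figures built on top of it can’t be trusted.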

What does this mean?

As you can tell, the display counts here are way off. With a 50/50 split, both versions should have been shown roughly the same number of times, and they weren’t, which makes the rest of the numbers pretty much useless. The conversion rate of my original pop-up, at 3.1%, is really quite good, but it has had far too few displays for me to choose it as a winner over the new one.
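Why can’t a 3.1% rate simply be declared the winner? One common way to check whether the gap between two conversion rates is bigger than chance alone would explain is a two-proportion z-test. The sketch below uses only the Python standard library. The counts in the usage example are made up (4 sign-ups from 130 displays happens to round to 3.1%); they are not the numbers from my screenshot, and the test assumes the display counts are accurate, which mine weren’t.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    conv_* are sign-ups, n_* are displays. Uses the pooled standard error.
    """
    if n_a == 0 or n_b == 0:
        raise ValueError("each variant needs at least one display")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both rates 0% (or both 100%): no evidence of a difference
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical: original 4/130 (about 3.1%) vs new 40/2,000 (2.0%).
    z, p = two_proportion_z_test(4, 130, 40, 2000)
    print(f"z = {z:.2f}, p = {p:.2f}")  # p is well above 0.05: could easily be noise
```

A p-value well above the usual 0.05 cut-off means the higher rate could easily be a fluke of the small sample, which is exactly the situation with a version that has barely been displayed.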

More to the point, I can’t tell whether the forms really are being displayed unevenly or whether only the numbers I’m seeing are wrong. So there really is no way to tell what is what.

We’ll come back to the conclusions once we’ve looked at the second test.

NOTE: I contacted AWeber about this and they said that there is a temporary problem with the split testing measurements which the techs are trying to resolve quickly.

2. Split testing my HelloBar up the top

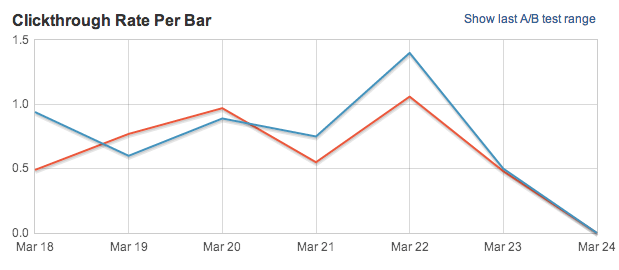

The little bar that you see at the top of my blog is called a HelloBar. It’s a rather expensive ($30 a month for my plan) but freaking awesome little tool developed by Neil Patel that lets you do a lot of cool things, like measure the click-through rate or run an A/B test between two bars to see which works better.

I’m currently running a little split test between two bars, one blue and one orange, to see which gets the better click-through rate to my post about starting a WordPress blog.

I always run these HelloBars the same way: two variations of the same message, see which gets the most clicks, choose it as the winner.

But when I noticed that the latest winning bar coincided with a drop in real conversions, I started reassessing the way I did things. And that is the crux of this article.

How A/B testing decreased my conversions

Now, any seasoned internet marketer will laugh at the obviousness of this. But it’s something I still wanted to share because, even though I’ve been testing for a while, I still made the mistake.

The thing about A/B testing is that it is all pointless unless you are tracking conversions.

Click through rate? Useless.

Traffic numbers? Useless.

Think about it for a second.

I could do a split test between two pop-up opt-in forms. One of them could be my regular “Join my mailing list” thing and the other could say “Enter your email and get $500”.

Obviously, the $500 version is going to get more subscribers.

But it will also have a massive rate of attrition. People will get their money (if I’m being serious) and then unsubscribe. They will be of absolutely no value to me whatsoever.

And so it was with my various split tests around my blog. I was getting so focussed on seeing bigger numbers and more clicks that I forgot to measure conversions. And that is a really big problem because it wastes time and money and can cause you to lose a lot of earning opportunities.
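Here is a minimal Python sketch of judging variants by an end result instead of the headline number, using the “$500” thought experiment. The field names (signups, retained, revenue) and every figure are hypothetical; swap in whatever your own tracking can actually measure.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    displays: int
    signups: int
    retained: int   # still subscribed after, say, 30 days (hypothetical metric)
    revenue: float  # sales you can actually attribute to this variant

    @property
    def signup_rate(self) -> float:
        return self.signups / self.displays if self.displays else 0.0

    @property
    def retained_per_display(self) -> float:
        return self.retained / self.displays if self.displays else 0.0

    @property
    def revenue_per_display(self) -> float:
        return self.revenue / self.displays if self.displays else 0.0


def pick_winner(results: list[VariantResult], metric: str) -> str:
    """Return the name of the variant with the highest value of the given property."""
    return max(results, key=lambda r: getattr(r, metric)).name


if __name__ == "__main__":
    regular = VariantResult("Join my mailing list", 5000, 150, 135, 900.0)
    # Made-up numbers: lots of sign-ups, but people take the money and leave.
    bribe = VariantResult("Enter your email and get $500", 5000, 600, 30, 0.0)
    for metric in ("signup_rate", "retained_per_display", "revenue_per_display"):
        print(f"{metric}: {pick_winner([regular, bribe], metric)}")
```

With numbers like these, the “winner” flips depending on which metric you pick, which is the whole problem with stopping at clicks or raw sign-ups.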

Goals and authentic tracking are key

What this really comes back to is setting clear goals/outcomes for your split testing and ensuring that you have authentic tracking in place for those goals.

This is very difficult when the product that you’re selling is not your own. To see which traffic is converting into customers, you need to be able to do things like add a tracking pixel to the sales page, and with many affiliate products that simply isn’t possible.
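For pages you do control (or a merchant willing to add your code), a tracking pixel is just a tiny request your server can count. Below is a minimal standard-library Python sketch, assuming the visitor’s variant name reaches the thank-you page somehow (for example as a URL parameter you pass along) and is embedded in the pixel URL. The `/pixel` path, the `variant` parameter and port 8000 are all hypothetical choices, and this is not how any particular tracking service works.

```python
from collections import Counter
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional
from urllib.parse import parse_qs, urlparse

conversions: Counter = Counter()  # variant name -> conversions seen


def record_hit(path: str, counts: Counter) -> Optional[str]:
    """Count a request like '/pixel?variant=orange'; return the variant or None."""
    url = urlparse(path)
    if url.path != "/pixel":
        return None
    variant = parse_qs(url.query).get("variant", [None])[0]
    if variant:
        counts[variant] += 1
    return variant


class PixelHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        record_hit(self.path, conversions)
        # 204 No Content: nothing needs to render, the request itself is the signal.
        self.send_response(204)
        self.end_headers()


if __name__ == "__main__":
    # The thank-you page would embed something like:
    # <img src="http://localhost:8000/pixel?variant=orange" width="1" height="1" alt="">
    HTTPServer(("localhost", 8000), PixelHandler).serve_forever()
```

The browser still makes the request even though the “image” is empty, so each hit on the thank-you page becomes one counted conversion for that variant.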

So what’s the answer?

Sympathy? No.

Actually, I think the starting point is to begin thinking about what you really want from the various activities that you do on your blog.

For example, if you are trying to get more email subscribers, then think about what you really want them for. You might have 100,000 people on your list, but:

- Do you have good open rates? How many of those 100,000 subscribers are opening your emails?
- Are they engaging? Of the people who do open those emails, how many are engaging with your content or whatever it is that you are putting out there?
- Are they unsubscribing or flagging you? Even worse than not opening, are they unsubscribing from your list or flagging you as spam?
- Are they buying? Lastly, how do those email subscribers go when it comes to purchasing your products or whatever it is that you are promoting?
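Those four questions translate directly into rates you can calculate from a single mailout’s report. Here is a minimal Python sketch; the field names are hypothetical (every email tool labels its report differently), and the example numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class MailoutStats:
    delivered: int
    opens: int         # unique opens
    clicks: int        # unique clickers
    unsubscribes: int
    spam_reports: int
    purchases: int


def list_health(s: MailoutStats) -> dict[str, float]:
    """Turn raw counts into the four questions above, as percentages."""
    def pct(part: int, whole: int) -> float:
        return 100.0 * part / whole if whole else 0.0

    return {
        "open rate": pct(s.opens, s.delivered),
        "click-to-open rate": pct(s.clicks, s.opens),  # engagement among openers
        "unsubscribe + spam rate": pct(s.unsubscribes + s.spam_reports, s.delivered),
        "purchase rate": pct(s.purchases, s.delivered),
    }


if __name__ == "__main__":
    stats = MailoutStats(delivered=100_000, opens=18_000, clicks=2_700,
                         unsubscribes=150, spam_reports=20, purchases=90)
    for name, value in list_health(stats).items():
        print(f"{name}: {value:.2f}%")
```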

Once you have figured out what you really want to achieve (goals) you need to figure out a real way to track that stuff.

In your email marketing software you might do this by segmenting your subscribers based on which sign-up form they used. Of course, that is problematic too, because other factors like your mailout, time of day, offer, etc. will also affect the results.
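If your email software lets you export subscribers along with the form they signed up through, the segmenting step is just a group-by. Here is a minimal Python sketch, assuming a hypothetical export with one record per subscriber; the field names and form names are illustrative only. The caveat above still applies: this shows differences between forms, not proof that the form caused them.

```python
from collections import defaultdict
from typing import NamedTuple


class Subscriber(NamedTuple):
    signup_form: str     # which opt-in form they used (hypothetical field)
    opened_recent: bool  # opened any recent email
    unsubscribed: bool
    purchased: bool


def rates_by_form(subs: list[Subscriber]) -> dict[str, dict[str, float]]:
    """Group subscribers by sign-up form and compute the share who open, leave and buy."""
    groups: dict[str, list[Subscriber]] = defaultdict(list)
    for s in subs:
        groups[s.signup_form].append(s)
    report = {}
    for form, members in groups.items():
        n = len(members)
        report[form] = {
            "subscribers": n,
            "opening": sum(m.opened_recent for m in members) / n,
            "unsubscribed": sum(m.unsubscribed for m in members) / n,
            "purchased": sum(m.purchased for m in members) / n,
        }
    return report


if __name__ == "__main__":
    subs = [
        Subscriber("popup-new", True, False, False),
        Subscriber("popup-new", False, True, False),
        Subscriber("sidebar", True, False, True),
    ]
    for form, stats in rates_by_form(subs).items():
        print(form, stats)
```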

If it is a landing page then you can use Visual Website Optimizer to create two versions of the same page and see which one performs better. Again, this is only really useful if you can track people to the final sign up page.

Do you think about numbers or conversions?

I would be really interested to know how many Tyrant Troops think about the numbers they are getting, or how many conversions those numbers are leading to. How many of you are tracking and measuring conversions on your blog? If you have any insights or tools please leave me a comment.

© Photographer: Igordutina | Agency: Dreamstime.com

I am new to split testing, although I plan to try it out later this week by sending 2 different mailouts for my next blog post.

I have noticed however that I get far more opens and clicks to blog posts which have clickbait titles – which seems to indicate that a lot of my subscribers are just casual readers and don’t visit my blog unless something catches their eye.

I don’t know how normal this is, or whether it’s a problem in any way. Maybe you can offer some insight into this, Ramsay?

Also, is there any possible way to split test giving my readers instructions at the end of an article to either comment or share? Obviously not through AWeber, but via another method? Asking them to share seems to work, but I don’t know if they would be sharing anyway, or if it puts people off from doing so (looking needy?).

Thanks.

Hey Jamie.

What do you mean by click bait titles? Like changing the main title so it’s more “BuzzFeed” like?

Good question on the last one. I ask for shares occasionally but kind of limit it to a gut feeling when I’ve created something really good – or the community has been involved.

For example, a recent article I wrote had the title ‘7 Stupid Life Rules You Should Stop Paying Attention To’, which I had changed from my draft title of ‘7 ways to challenge conformity’. I changed both the title and the subheadlines to something catchier.

Same thing, just overly dramatic to catch people’s attention.

I suppose I could have used both of those titles in the email header from Aweber to track how each title converts?

Yeah I do that sometimes – test two different titles. The problem then is gauging who clicked through and then who engaged more…

Great point. This is something I never would have thought about in my current stage of blogging. Thanks, Ramsay!

Hope it helps, Kevin. Thanks for leaving a comment. Appreciate it.

It definitely helps. I’ve had terrible conversions with my subscribe form and I haven’t put any effort into finding out why. This has sparked the desire to investigate and start thinking about what I want for my subscribers. Also, you use AWeber, which requires the user to confirm their subscription before they actually get added to your list. I chose a service that specifically does not do this, as I was getting terrible results with follow-through: people weren’t confirming their subscriptions. Could this actually hurt me in the long run?

I think it could hurt your deliverability rates. I’m not 100% sure. You defo do want the people on your list to want to be there, though.

Took a quick look at your site – I can’t actually see the opt-in form…?

That’s another thing… My firm is also only in a modal dialog on the second page visited. I didn’t want to seem pushy or desperate so I didn’t put it on the first visited page. Is that bad practice? 🙂

Get it on the front page, Kev! 🙂

Top of your sidebar. And consider a dedicated page in your menu where you talk about how people can subscribe and the benefits they’ll get from it.

Form – autocorrect strikes again!

Excellent advice! You’re a gold mine! Thanks, Ramsay!

Kevin,

I use Aweber and I have turned OFF the confirm subscription function – my subscribers get taken straight to my upsell page and start getting my emails.

However, after a while I’m going to turn ‘confirmation’ back on, to see whether the subscribers are more engaged or not.

Just thought I would let people know that!

Thanks,

Claire

I thought it was in AWeber’s policy that you had to have that on?

This is what they say in the Web Form settings:

“If you prefer, you can disable Confirmed Opt-In for people who sign up using a web form that you create and place on your site.

However, we strongly encourage you to use Confirmed Opt-In for your web forms.”

But it’s still your choice.

Claire

Hi,

I’ve just started tracking, and I use AdTrackzGold. This is great software because I put a new tracking code in each of my promotions (cloaked) and it tracks:

Campaigns, Raw Clicks, Unique Clicks, Actions (sign-ups), Sales, Avg CPC, CPA, CPS, Cost, Revenue, Profit, R.O.I.

So at a glance I can see how many people see my squeeze page, how many subscribed and how many bought my upsell.

If I pay for advertising it tells me my ROI and I can see if it is worth sticking with that advertising method or not.

I haven’t done any split testing yet though, but I will soon (it has a split testing section in AdTrackzGold too)

Thanks,

Claire

Nice one Claire! Any ideas on tracking a product where you don’t own the confirmation page?

Well that’s the problem isn’t it.

AdTrackzGold has a function to track affiliate clicks, actions and sales, but the merchant has to put your code on their thank-you page (as if that’s going to happen!)

Claire

I have been offered that by a few of mine but not all of them. I’m sure there is a way – just tricky!

That sounds awesome! I am bookmarking that service. 🙂

Kevin,

It’s not even a service, it’s a one-off payment of $67 for the WP Plugin version (I don’t have this as I bought mine years ago, I have the script).

Thanks,

Claire

Oh cool. I will definitely be investing in that in the future.

I’m not a big fan of split testing, mainly because it’s a complicated task to do, but surely it can bring in a lot of extra cash to the business, if done right.

You bring a great point in this article that by increasing your click through rate, you don’t necessarily make more money, because maybe the ad attracted the wrong audience.

Another example is split testing a squeeze page. Squeeze page A, with a higher conversion rate, might get you fewer sales than squeeze page B, with a lower conversion rate. This can happen because page B was pre-selling, so its conversion rate naturally dropped, but in the end it could make more money.

So, you have to track the end result (money in the bank) to find the true winners.

Yep, well said Liudas. Thanks for commenting.

I have not done split testing, but find a couple of things helpful on other topics you mentioned:

1. to keep my audience engaged, and make sure I am not pulling dead weight, I purge my lists every 3 months. I check who does NOT open emails regularly and send them an “I hate to see you go” type of email with an offer to stay engaged (there is an incentive). Some will become active again, even if they do not take up the incentive, while others, if they do not respond, get deleted.

2. the weirdest part that I found is that I get more engagement from my audience through emails (as if they truly do not know to leave comments, although I am constantly educating them about this) and on Facebook. However, in spite of that, my audience is still buying my products. At the end of the day, I guess that is what matters most to a blogger.

So, for me, conversions are much more important than numbers. If I held on to numbers only, my email list would be double the size, but I would rather have a smaller active audience than a larger unresponsive audience.

Hi Elena.

Thanks for the great comment.

I try to keep my list trimmed down as well. Usually just below the 10,000 mark. Helps to save fees as well!

What would you say has helped your sales the most?

Yes, the fees can be a killer, that is why I am trying to keep my list as “alive” and active as possible. I am getting closer to the 10K mark, so carrying no dead weight is key for sure. In fact, I will soon be re-editing my drip campaign to keep my readership even more engaged.

As for sales, I call it “romancing the customer”. I make a point to talk to my readers when they leave comments, email me or use social media to interact (much like you do here). Some get one of my products or ebooks immediately, while others (“observers”) take their time to see if I am a real person and mean what I promise. They end up becoming the most loyal of customers in the end. I never overpromise and always overdeliver, and keep traditional marketing fluff to the bare minimum.

Also, knowing when to offer certain products has been key for me. While I offer services, I also offer ebooks. At the beginning of the year I normally get “Daniel Fast” traffic, and now “Lent Season” traffic, so I make the most of those periods and offer an ebook in addition to my free content.

True that. I have never been a great fan of A/B split testing myself. Sure, it helps with better open responses, but I have also seen higher unsubscribe rates with a few of our clients who consistently depend on the test.

Interesting. What kind of mailing lists?

Well… off the top of my head, a client once targeted his email database with the ‘winning subject line’ from his A/B split testing. The subject line was pretty deceptive compared to what the content actually was. End result: too many unsubscribes and spam complaints. A pretty huge price for better open rates.

Wow. Fascinating.

Hey Ramsay

Pretty interesting article – I’m not a huge fan of A/B testing personally.

I’m not saying not to do it or that it’s not a very useful tool, but I personally only test things like headlines and some basic layout formats. There is an art and a science to it, and I feel that testing has sometimes been given too much air time in online marketing circles.

It can have dramatic results and it can also have unexpected or negative results. I feel it’s more important to stick to proven basics that fit you best and then, from time to time, test: first decide what your goals are, then test your results.

All I can say is I have never had massive differences between any of my A/B tests. Sure, it’s good for optimizing, but for big changes there are better places to invest your time.

Paul

Hey Paul. 🙂

Yeah I am starting to agree with that. I think it needs to be maybe restricted to purely sales platforms like landing pages where small differences can be huge and are easily tracked.

It’s pretty cool that you agree 😀. It’s one of those things that get talked about over and over in marketing circles, but I can see why: people love to hear about data, testing, changes and results.

But realistically getting to actually know your customers will help you increase conversions as you will produce more helpful resources in a format they like, delivered to them in the way that they don’t find objectionable and in fact appreciate.

I agree about landing pages and sales-type pages with a high volume of traffic, as small incremental changes bring in consistent results. I do have to say that those sorts of pages have to be approached methodically, which requires planning, clear objectives and probably quite high traffic figures.

Thanks, nice tips.

I really want to try A/B testing sometime on my website but don’t really know what to expect or where to start. 🙁

Start with your opt-in forms.

Great article – I’m doing split testing with my email program now, and this gave me some new ways to look at the data. One thing that really jumped out at me was that I have been tracking unsubscribes and engagement, but I’ve been looking at them the wrong way! I’m going to make some changes to my evaluation metrics to look at the relative rates of engagement and unsubscribes. I would think I need to compare those numbers to the total number of opens to get the best idea of what’s going on, would you suggest any other way of making use of that data? Thanks as always for making me put my thinking cap on!

That’s a really good question. I tend not to worry about unsubscribes too much – I get between 30 and 50 for every email that I send out. I think it’s better than having them mark you as spam. Not sure how to make more real use of the data though.

Hmm, is anyone else having problems with the images on this blog loading?

I’m trying to figure out if it’s a problem on my end or if it’s the blog. Any feedback would be greatly appreciated.